Play Infinite Versions of AI-Generated Pong on the Go

There is currently a lot of interest in AI tools designed to help programmers write software. GitHub’s Copilot and Amazon’s CodeWhisperer take deep-learning techniques originally developed for generating natural-language text and adapt them to generate source code. The idea is that programmers can use these tools as a kind of auto-complete on steroids, using prompts to produce chunks of code that they can integrate into their software.

Looking at these tools, I wondered: Could we take the next step and take the human programmer out of the loop? Could a working program be written and deployed on demand with just the touch of a button?

In my day job, I write embedded software for microcontrollers, so I immediately thought of a self-contained handheld device as a demo platform. A screen and a few controls would allow the user to request and interact with simple AI-generated software. And so was born the idea of infinite Pong.

I chose Pong for a number of reasons. The gameplay is simple, famously explained on Atari’s original 1972 Pong arcade cabinet in a triumph of succinctness: “Avoid missing ball for high score.” An up button and a down button are all that’s needed to play. As with many classic Atari games created in the 1970s and 1980s, Pong can be written in relatively few lines of code, and has been implemented as a programming exercise many, many times. This means that the source-code repositories ingested as training data for the AI tools are rich in Pong examples, increasing the likelihood of getting viable results.

I used a US $6 Raspberry Pi Pico W as the core of my handheld device; its built-in wireless allows direct connectivity to cloud-based AI tools. To this I mounted a $9 Pico LCD 1.14 display module. Its 240 x 135 color pixels are ample for Pong, and the module integrates two buttons and a two-axis micro joystick.

My choice of programming language for the Pico was MicroPython, because it is what I normally use and because it is an interpreted language, so code can be run without the need for a PC-based compiler. The AI coding tool I used was the OpenAI Codex. It can be accessed via an API that responds to queries sent as HTTP requests, which are straightforward to construct and send using the urequests and ujson libraries available for MicroPython. Using the OpenAI Codex API is free during the current beta period, but registration is required and queries are limited to 20 per minute, still more than enough to accommodate even the most fanatical Pong jockey.
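A query of this kind might be put together roughly as follows. This is only a sketch, not the project's actual code: the endpoint URL, the model name `code-davinci-002`, the parameter values, the shape of the JSON reply, and the helper names `build_request` and `send_request` are all assumptions made for illustration, and the API key is a placeholder.

```python
try:
    import ujson as json  # MicroPython
except ImportError:
    import json  # desktop Python fallback, handy for testing

# Assumed endpoint and model name; check the documentation of the API you use.
API_URL = "https://api.openai.com/v1/completions"
MODEL = "code-davinci-002"


def build_request(prompt, api_key, max_tokens=2000):
    """Return (url, headers, body) for a completion request."""
    headers = {
        "Content-Type": "application/json",
        "Authorization": "Bearer " + api_key,
    }
    body = json.dumps({
        "model": MODEL,
        "prompt": prompt,
        "max_tokens": max_tokens,  # illustrative value
        "temperature": 0.7,        # illustrative value
    })
    return API_URL, headers, body


def send_request(prompt, api_key):
    """Send the prompt and return the generated text (needs a network link)."""
    import urequests  # imported here so build_request works without it
    url, headers, body = build_request(prompt, api_key)
    response = urequests.post(url, headers=headers, data=body)
    try:
        # Assumed reply layout: a list of choices, each with a "text" field.
        return response.json()["choices"][0]["text"]
    finally:
        response.close()
```

Keeping the request construction separate from the network call makes it possible to check the payload on a desktop machine before moving to the Pico.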

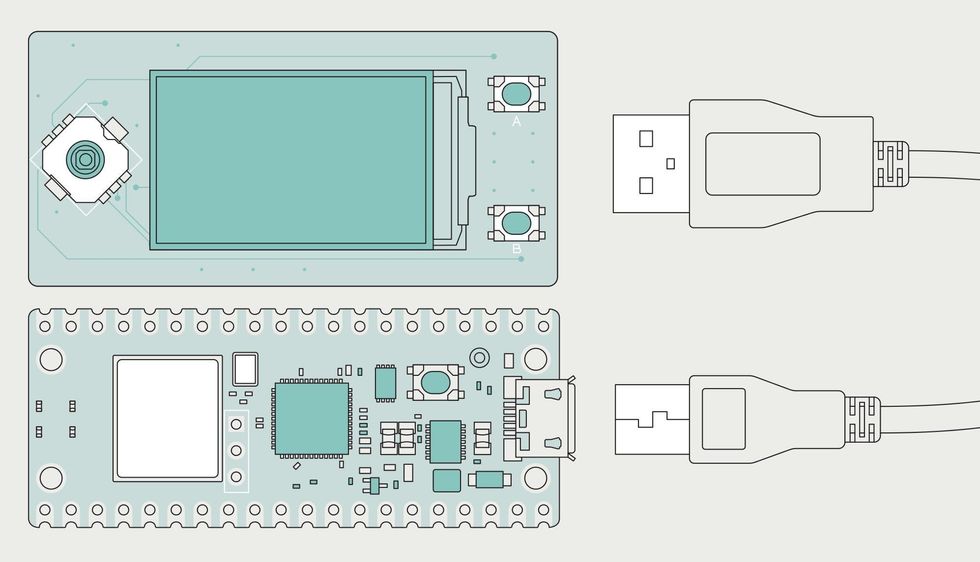

Only two hardware modules are needed: a Raspberry Pi Pico W [bottom left] that supplies the compute power and a plug-in board with a screen and simple controls [top left]. Nothing else is needed except a USB cable to supply power. Credit: James Provost

The next step was to create a container program. This program is responsible for detecting when a new version of Pong is requested via a button push. When that happens, it sends a prompt to the OpenAI Codex, receives the results, and launches the game. The container program also sets up a hardware abstraction layer, which handles the physical connection between the Pico and the LCD/control module.
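The container's request-and-launch cycle can be sketched as a simple loop. The function and parameter names here (`run_container`, `wait_for_button`, `fetch_code`, `max_games`) are hypothetical, and launching the result with `exec` is an assumption; the real container may start the game differently.

```python
def run_container(wait_for_button, fetch_code, prompt, max_games=None):
    """Request and launch a new game each time the button is pressed.

    wait_for_button: blocks until the user asks for a new game
    fetch_code:      sends the prompt to the AI service, returns source text
    max_games:       optional limit so the loop can end (None = run forever)
    """
    launched = 0
    failures = 0
    while max_games is None or launched + failures < max_games:
        wait_for_button()
        source = fetch_code(prompt)
        try:
            # Run the generated program in a fresh namespace.
            exec(source, {"__name__": "__main__"})
            launched += 1
        except Exception as err:
            # Generated code is not guaranteed to work; report and carry on.
            print("Game failed:", err)
            failures += 1
    return launched, failures
```

Passing the button and network functions in as arguments keeps the loop independent of the hardware, so it can be exercised with stand-ins before it is wired to the real buttons and API.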

The most critical element of the whole project was creating the prompt that is transmitted to the OpenAI Codex every time we want it to spit out a new version of Pong. The prompt is a chunk of plain text with the barest skeleton of source code: a few lines outlining a structure common to many video games, namely a list of libraries we’d like to use, a call to process events (such as keypresses), a call to update the game state based on those events, and a call to display the updated state on the screen.

How to use those libraries and fill out the calls is up to the AI. The key to turning this generic structure into a Pong game is the embedded comments, which are optional in source code written by humans but really useful in prompts. The comments describe the gameplay in plain English, for example: “The game includes the following classes…Ball: This class represents the ball. It has a position, a velocity, and a debug attributes [sic]. Pong: This class represents the game itself. It has two paddles and a ball. It knows how to check when the game is over.” (My container and prompt code are available on Hackaday.io.)
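To give a feel for the shape of such a prompt, here is a hypothetical skeleton held in a Python string. It is not the real prompt, which is on Hackaday.io: the comment wording is paraphrased, and the library names, method names, and loop layout are assumptions chosen to match the description above.

```python
# Hypothetical prompt skeleton; the wording of the real prompt differs.
PROMPT = '''\
# Pong for a handheld device with a small color LCD and two buttons.
# The game includes the following classes:
# Ball: This class represents the ball. It has a position and a velocity.
# Pong: This class represents the game itself. It has two paddles and a ball.
#       It knows how to check when the game is over.

import time
import framebuf

def main():
    game = Pong()
    while not game.is_over():
        game.process_events()   # read the buttons
        game.update()           # move the ball and paddles
        game.draw()             # show the new state on the screen
'''
```

The skeleton names the classes and calls but defines none of them; writing those definitions is the part left to the AI.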

What comes back from the AI is about 300 lines of code. In my early attempts the code would fail to display the game because the version of the MicroPython framebuffer library that works with my module is different from the framebuffer libraries the OpenAI Codex was trained on. The solution was to add descriptions of the methods my library uses as prompt comments, for example: “def rectangle(self, x, y, w, h, c).” Another issue was that many of the training examples used global variables, whereas my initial prompt defined variables as attributes scoped to individual classes, which is generally better practice. I eventually had to give up, go with the flow, and declare my variables as global.
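Adding such hints can be as simple as prepending comment lines to the prompt. In this sketch the helper name `add_api_hints` and the heading comment are hypothetical; only the `rectangle` signature comes from the text above.

```python
def add_api_hints(prompt, signatures):
    """Prepend comment lines listing the display methods the AI should call."""
    lines = ["# The display object provides these methods:"]
    for signature in signatures:
        lines.append("#   " + signature)
    return "\n".join(lines) + "\n" + prompt


hinted = add_api_hints(
    "import framebuf\n",
    ["def rectangle(self, x, y, w, h, c)"],
)
print(hinted)
```

Because the hints are ordinary comments, they do not affect the skeleton code itself; they only steer the AI toward the method names and argument order that the local library actually provides.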

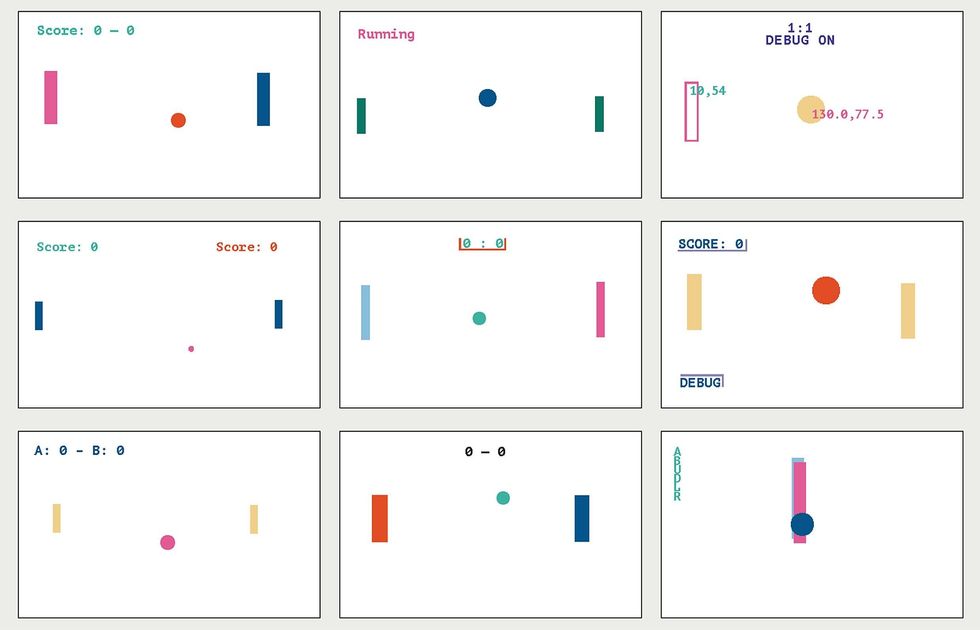

The versions of Pong created by the OpenAI Codex vary widely in ball and paddle size, in color, and in how scores are displayed. Sometimes the code results in an unplayable game, such as the one in the bottom right corner, where the player paddles have been placed on top of each other. Credit: James Provost

The code that comes back from my current prompt produces a workable Pong game about 80 percent of the time. Sometimes the game doesn’t work at all, and sometimes it produces something that runs but isn’t quite Pong, such as when it allows the paddles to be moved left and right in addition to up and down. Sometimes the game is for two human players, and other times you play against the machine. Because the prompt does not specify which, Codex picks either option. When you play against the machine, it’s always interesting to see how Codex has implemented that part of the code logic.

So who is the author of this code? Certainly there are legal disputes stemming from, for example, how this code should be licensed, as much of the training set is based on open-source software that imposes specific licensing conditions on code derived from it. But licenses and ownership are separate from authorship, and I believe authorship belongs to the programmer who uses the AI tool and verifies the results, as would be the case if you created artwork with a painting program made by a company and used its brushes and filters.

As for my project, the next step is to look at more complex games. The 1986 arcade hit Arkanoid on demand, anyone?